Building Research Podcast Studio with Claude — March 14, 2026

Repository: https://github.com/adrianhensler/podcast-generator

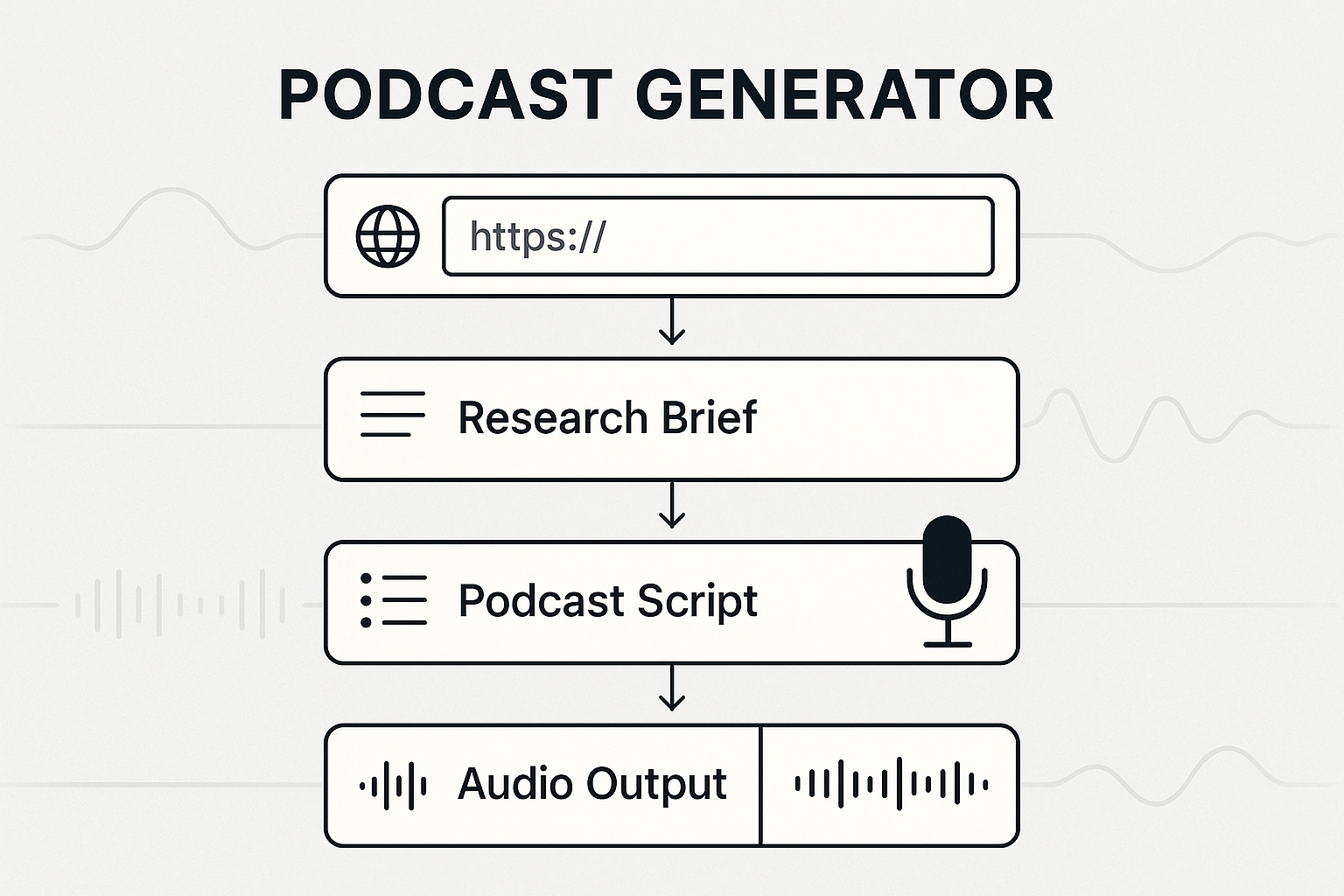

Today I built out the streaming and UX layer for Research Podcast Studio, a web app that turns any article URL into a researched podcast episode. Starting from a working but rough MVP (it could generate a brief, a script, and render audio, based on a skill built for Claude Code CLI), the goal was to make the experience actually pleasant: token-by-token streaming output, plain-English revision prompts, and live inline status so the user knows what’s happening at every step.

This is an account of what got built, what broke, and how it got fixed.

What the App Does

The pipeline has three stages. First, it ingests the source URL — scraping the content directly, with an optional Tavily web search step to pull supplementary context for thin articles. Second, it runs a two-pass LLM process to generate a podcast script: a fast “outline” call produces structured JSON (hook, key questions, talking points, risks), then a slower “expand” call turns that into a full spoken-language script targeting a word count. Third, a TTS renderer concatenates voice-acted audio for Host A and Host B and produces an MP3.

The stack is FastAPI + SQLite on the backend, with HTMX and vanilla JS on the frontend. The whole project came in under 4,000 lines of code.

The Plan: Streaming, Revision Prompts, Inline Status

The session started with a detailed spec to address three friction points in the existing UX:

- Brief and script generation showed a spinner, then a sudden full-page reload — no sense of progress during what can be a 30-second operation.

- There was no way to ask for revisions after seeing the brief or script — you’d have to start over.

- Status indicators were at the top of the page and disconnected from the content.

The solution was Server-Sent Events (SSE) streaming: instead of waiting for the full brief or script and then dumping it on the page, the LLM output would stream token-by-token into a live textarea as it was generated. Four SSE endpoints were added to a new stream.py router for streaming brief generation, streaming script expansion, and revision prompts for both.

The backend grew a new llm_stream() async generator in llm_client.py that streams tokens via httpx SSE and yields a sentinel {"done": true, "log": ...} at the end with cost and timing metadata. New intermediate status values were added to the pipeline: brief_pending → brief_streaming → brief_ready, and similarly for scripts.

The frontend JavaScript was substantially rewritten to handle the streaming state machine and new status transitions.

The First Crash: max_tokens and Thinking Tokens

After deploying, the first test run failed silently. Looking at the logs, the problem was clear: tokens=654+2048 — the brief had hit max_tokens exactly. The qwen3.5 model being used streams its <think> reasoning chain first, before writing a single word of actual output. Those thinking tokens count against max_tokens in streaming mode, and at 2048 they consumed the entire budget. The <think> block was never closed, so the cleanup code that stripped thinking from the textarea never fired — leaving the user staring at raw <think> garbage.

Let me analyze...

Three fixes went in:

_strip_thinkingwas hardened to handle truncated<think>blocks, synthetically closing them so the content could be stripped even if the model got cut off mid-thought.final_contentwas changed to always fire at stream end regardless of whether thinking was detected, replacing the textarea content with clean output in all cases.max_tokensfor the brief stream was increased from 2048 to 8192, giving the model room to think and write.

The Infinite Loop Bug

The next problem was more expensive. A report of “it seems hung — kill the server, it’s looping — thanks for the $$$ token spend” pointed to something serious. Examining the logs showed the pattern:

GET /stream/brief ← stream 1 opens, LLM call 1 starts

PUT .../research_brief ← HTMX auto-save fires, returns 422

GET /projects/... ← page reload

GET /stream/brief ← stream 2 opens, LLM call 2 starts

GET /projects/... ← another reload

GET /stream/brief ← LLM call 3 starts

Two bugs were compounding each other. The original streaming code used the browser’s native EventSource API. EventSource has automatic reconnect baked into the browser spec — when the server closes the SSE connection (as it does normally after sending done), the browser immediately reconnects. Since the project status was still brief_streaming on reconnect, the server started a new LLM call. Each completion triggered another reconnect, and the loop ran until the server was killed.

The second bug was that HTMX’s auto-save on textarea blur was sending application/x-www-form-urlencoded, but the artifact save endpoint expected JSON — producing a 422 error that cascaded into a page reload, which opened yet another SSE connection.

The fix on the SSE side was to replace EventSource entirely with fetch + ReadableStream. Unlike EventSource, fetch is a one-shot operation — no reconnect, no loop. It’s also better suited here because LLM generation is a single expensive operation rather than a persistent event feed, and it supports POST (which the revision endpoints needed anyway). The artifact endpoint was fixed to accept both content types.

A server-side guard was also added: if /stream/brief is hit while a generation is already in progress, it returns a {"type": "wait"} event instead of starting a new LLM call, and the client polls until the existing generation finishes.

Surfacing the Thinking to the User

With the loop fixed, a subtler UX issue remained. The model spends 15–30 seconds on its reasoning chain before writing a single word of actual content. From the user’s perspective this looked like a hang — the textarea was empty and nothing was moving.

The thinking tokens were already being suppressed server-side (streamed into the cost log but not forwarded to the browser). The fix was to show them. When a thinking token arrives, the server sends {"type": "thinking"} and the client renders a small amber-bordered collapsible box below the status indicator, streaming the model’s reasoning in real-time in monospace. When the </think> marker arrives, the reasoning box disappears, the status updates to “Writing brief…”, and content starts flowing into the main textarea.

This transformed the experience from “is it hung?” to “the model is working” — you can watch it think through the problem before it starts writing.

The Loop Returns (Briefly)

During further testing the infinite loop came back in a slightly different form. The server-side guard — designed to prevent a second LLM call when a page reload hit /stream/brief during generation — was too aggressive. It would return wait and tell the client to poll until brief_ready, but if the original LLM call had died (say, due to a server restart), the status stayed brief_streaming forever. The client polled forever. The project was stuck.

The fix was simpler: instead of a server-side guard, the client was taught to recognize when it lands on a project page with brief_streaming status (i.e., on a page reload mid-generation) and default to polling rather than immediately opening a new stream. The single active generation continues; if the page is reloaded, any tab just watches and waits.

The Full Pipeline Working

After those fixes, a clean smoke test ran through the full pipeline: URL ingestion, research brief streaming (with live thinking display), two-pass script generation, and TTS audio render. The result was a playable MP3 and a cost breakdown table showing token counts and spend per stage, with a toggle button to inspect the model’s saved reasoning chain for any stage.

The app was pushed to GitHub at adrianhensler/podcast-generator, with a screenshot and a link to an example MP3 in the README.

What’s Next

The session ended with a discussion of what the MVP still needed. The pipeline works end-to-end, but a few gaps remain before handing it to anyone else: a retry button for projects stuck in error state, confirmation that the audio player is visible on the done page, and the Google Fonts CDN failing silently in offline environments (font files should be served locally). The revision prompts are wired up but haven’t been exercised heavily — that’s the next area to test.

The whole thing — ingestion, SSE streaming, thinking token surfacing, two-pass script generation, TTS rendering, cost tracking — fits in under 4,000 lines. Not bad for a day’s work.